Aim:

The aim of this project is to enhance the ability to distinguish between AI-generated and human-authored text by utilizing a fine-tuned BERT classifier. This approach emphasizes contextual understanding and deep language representation to outperform traditional machine learning systems in identifying AI-generated content.

Abstract:

With the rapid rise of generative AI models like ChatGPT, distinguishing between AI-generated and human-written text has become an increasingly critical challenge in domains such as academia, journalism, and content authenticity verification. As these models grow more sophisticated, traditional detection methods struggle to keep pace. The line between human creativity and machine generation is becoming blurred, posing risks to originality, trust, and ethical use of AI technologies. This project addresses the growing need for accurate and context-aware AI text detection by introducing a BERT-based classification approach. By leveraging the BERT tokenizer and fine-tuning a pre-trained BERT model for binary classification, the system captures deep contextual relationships in text that conventional models often overlook. While the existing benchmark system used traditional machine learning techniques with vectorization strategies like TF-IDF and achieved promising results, our work enhances this foundation by introducing an end-to-end transformer-based model for improved accuracy, reliability, and linguistic understanding.

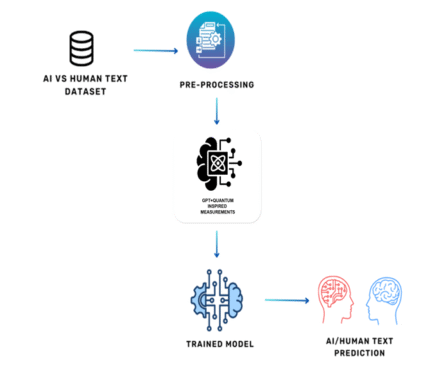

Proposed System:

Our proposed system introduces a fine-tuned transformer-based model using BERT for both tokenization and classification. The input text is first processed using BERT’s tokenizer, which produces encodings. These encoded representations are passed to a pre-trained BERT model, and is used for binary classification. The entire model is fine-tuned on the labeled dataset, learning to distinguish between AI and human writing. This enhances accuracy, reduces preprocessing(NLP) overhead, and makes the model more robust to adversarial or paraphrased AI content.

Advantage:

The use of a fine-tuned BERT model offers significant advantages over traditional methods. It enables end-to-end processing from raw text to classification, removing the need for separate vectorization or feature engineering. BERT’s contextual embeddings capture the intricacies of language usage, idioms, tone, and semantics, allowing the model to detect subtle signs of artificial generation. This results in improved detection accuracy and better generalization to new, unseen data. Furthermore, BERT’s widespread adoption and available tools for explainability make the system scalable and transparent.

Reviews

There are no reviews yet.