Aim:

The aim of this project is to develop an automated shoeprint image retrieval system for forensic investigation. The system matches crime-scene shoeprints against reference images using handcrafted visual features, and it seeks to improve retrieval accuracy through rank-level fusion of multiple descriptors.

Abstract:

This project presents a rank-fusion-based shoeprint image retrieval framework for forensic applications. The system extracts holistic, local texture, and keypoint-based features from shoeprint images. The rankings obtained from the individual similarity scores are combined using weighted rank fusion to improve matching reliability. A neighbourhood graph similarity measure is incorporated to enhance rank consistency. Experimental results demonstrate the effectiveness of the approach on controlled shoeprint datasets.
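
As an illustration of the weighted rank fusion step described above, the following is a minimal sketch rather than the project's actual implementation. It assumes every descriptor scores the same set of reference images, that a higher score means a closer match, and that fusion is a weighted sum of rank positions. The descriptor names, image IDs, scores, and weights are all hypothetical.

```python
from typing import Dict, List


def ranks_from_scores(scores: Dict[str, float]) -> Dict[str, int]:
    """Map each reference image ID to its rank (1 = most similar)."""
    ordered = sorted(scores, key=lambda img_id: scores[img_id], reverse=True)
    return {img_id: rank for rank, img_id in enumerate(ordered, start=1)}


def weighted_rank_fusion(
    score_lists: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> List[str]:
    """Fuse per-descriptor rankings; a lower weighted rank sum is better."""
    fused: Dict[str, float] = {}
    for descriptor, scores in score_lists.items():
        for img_id, rank in ranks_from_scores(scores).items():
            fused[img_id] = fused.get(img_id, 0.0) + weights[descriptor] * rank
    return sorted(fused, key=lambda img_id: fused[img_id])


# Hypothetical similarity scores for three reference prints.
scores = {
    "holistic": {"ref_a": 0.9, "ref_b": 0.4, "ref_c": 0.2},
    "texture": {"ref_a": 0.3, "ref_b": 0.8, "ref_c": 0.1},
    "keypoint": {"ref_a": 0.7, "ref_b": 0.6, "ref_c": 0.5},
}
# Hypothetical descriptor weights (assumed to sum to 1).
weights = {"holistic": 0.5, "texture": 0.2, "keypoint": 0.3}

fused_ranking = weighted_rank_fusion(scores, weights)
print(fused_ranking)  # ['ref_a', 'ref_b', 'ref_c']
```

Fusing ranks instead of raw scores avoids having to calibrate descriptors whose similarity values lie on different scales.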

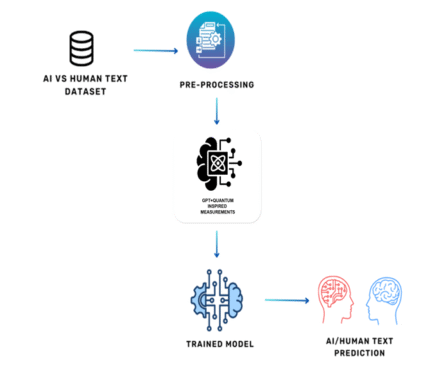

Proposed System:

The proposed system introduces a hybrid shoeprint retrieval framework that combines deep CNN features with local keypoint descriptors. Robust grayscale pre-processing preserves texture while suppressing noise. Multi-scale CNN features are extracted using a pretrained ResNet-50 to capture high-level shape and texture information. RootSIFT descriptors, filtered by a ratio test and verified geometrically with RANSAC, model structural consistency. FAISS is employed for efficient large-scale candidate retrieval. An adaptive fusion strategy dynamically balances the CNN and SIFT scores for each query. Reranking is then applied to enforce local geometric consistency. The system supports scalable, reusable database construction. Retrieval performance is evaluated using Top-1, Top-5, Top-10, and Top-20 accuracy.
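
Two of the steps named above can be sketched in plain Python. The example below is illustrative, not the project's code. `root_sift` applies the standard RootSIFT transform (L1-normalise the descriptor, then take element-wise square roots), and `top_k_accuracy` computes the fraction of queries whose true reference appears among the first k retrieved results. The function names, toy descriptor, and toy rankings are assumptions made for this example.

```python
import math
from typing import List, Sequence


def root_sift(descriptor: Sequence[float], eps: float = 1e-7) -> List[float]:
    """L1-normalise a SIFT descriptor, then take element-wise square roots."""
    l1 = sum(abs(v) for v in descriptor) + eps
    return [math.sqrt(abs(v) / l1) for v in descriptor]


def top_k_accuracy(
    rankings: Sequence[Sequence[str]],
    ground_truth: Sequence[str],
    k: int,
) -> float:
    """Fraction of queries whose true reference ID is in the top k results."""
    hits = sum(
        1 for ranked, truth in zip(rankings, ground_truth) if truth in ranked[:k]
    )
    return hits / len(ground_truth)


# Toy 4-dimensional descriptor (real SIFT descriptors have 128 dimensions).
rs = root_sift([1.0, 3.0, 0.0, 0.0])

# Hypothetical retrieval output for three queries, best match first.
rankings = [
    ["ref_a", "ref_b", "ref_c"],
    ["ref_b", "ref_c", "ref_a"],
    ["ref_c", "ref_a", "ref_b"],
]
ground_truth = ["ref_a", "ref_c", "ref_b"]

top1 = top_k_accuracy(rankings, ground_truth, k=1)
top2 = top_k_accuracy(rankings, ground_truth, k=2)
print(top1, top2)
```

After the RootSIFT transform each descriptor has approximately unit L2 norm, so comparing transformed descriptors with Euclidean distance corresponds to comparing the original descriptors with the Hellinger kernel.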

Advantages:

- The proposed system is robust to noise, partial prints, and illumination changes.

- Deep CNN features provide strong discriminative capability absent in handcrafted methods.

- Geometric verification significantly reduces false matches.

- Adaptive fusion outperforms static rank fusion by adjusting to query reliability.

- The system scales efficiently and is suitable for real forensic deployment.
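
The source does not specify how query reliability is measured, so the following is only one possible sketch of per-query adaptive fusion. It assumes that each modality's reliability can be approximated by the gap between its best and second-best min-max-normalised score, and that the CNN weight `alpha` is the CNN's share of the total gap. All names and values are hypothetical, and both score dictionaries are assumed to cover the same non-empty candidate set.

```python
from typing import Dict, List, Tuple


def min_max(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale scores to [0, 1]; all-equal scores map to 0."""
    lo, hi = min(scores.values()), max(scores.values())
    span = hi - lo
    if span == 0:
        return {k: 0.0 for k in scores}
    return {k: (v - lo) / span for k, v in scores.items()}


def top_gap(norm_scores: Dict[str, float]) -> float:
    """Gap between the best and second-best score (assumed reliability cue)."""
    ordered = sorted(norm_scores.values(), reverse=True)
    return ordered[0] - ordered[1] if len(ordered) > 1 else 1.0


def adaptive_fusion(
    cnn_scores: Dict[str, float],
    sift_scores: Dict[str, float],
) -> Tuple[List[str], float]:
    """Return the fused ranking and the CNN weight chosen for this query."""
    cnn_n, sift_n = min_max(cnn_scores), min_max(sift_scores)
    g_cnn, g_sift = top_gap(cnn_n), top_gap(sift_n)
    total = g_cnn + g_sift
    alpha = 0.5 if total == 0 else g_cnn / total  # fall back to equal weights
    fused = {k: alpha * cnn_n[k] + (1 - alpha) * sift_n[k] for k in cnn_n}
    ranking = sorted(fused, key=lambda k: fused[k], reverse=True)
    return ranking, alpha


# Hypothetical query: the CNN barely separates ref_a from ref_b, while the
# SIFT inlier counts clearly favour ref_b.
cnn_scores = {"ref_a": 0.80, "ref_b": 0.78, "ref_c": 0.40}
sift_inliers = {"ref_a": 12, "ref_b": 40, "ref_c": 5}

ranking, alpha = adaptive_fusion(cnn_scores, sift_inliers)
print(ranking, round(alpha, 3))
```

In this toy query the CNN scores are ambiguous, so the weight shifts towards the SIFT evidence. A static fusion scheme would apply the same weights regardless of how decisive each modality is for the query at hand.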
