Aim:

This study aims to improve the accuracy of spam email detection by using the contextual language representations of the DistilBERT model for text classification.

Abstract:

Spam emails continue to pose significant challenges in the digital landscape, affecting individuals and organizations alike. Traditional spam detection methods, such as keyword filters and feature-based classifiers, often fail to account for the complex and evolving nature of spam content. This research proposes a framework that uses the DistilBERT model, a lightweight and efficient version of BERT, for spam email classification. DistilBERT understands the context and semantics of language at a lower computational cost than BERT, which allows the system to achieve high accuracy in distinguishing spam from legitimate emails. The proposed model shows improved accuracy and efficiency over traditional techniques, offering a robust, scalable, and resource-friendly solution for spam detection.

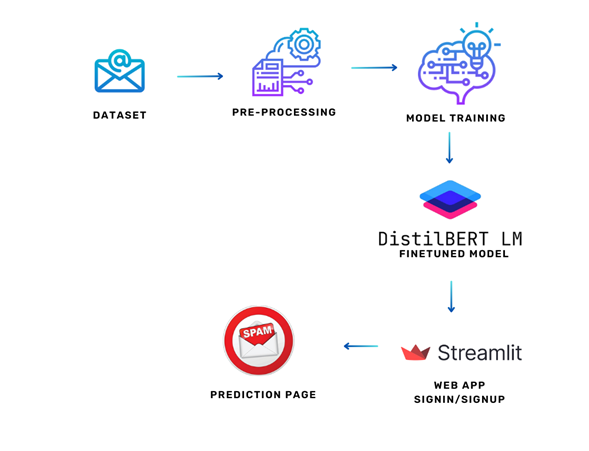

Proposed Method:

This study proposes the use of the DistilBERT model for spam email detection. DistilBERT is a transformer-based model distilled from BERT: it retains about 97% of BERT's language understanding performance while having 40% fewer parameters and running about 60% faster. It processes email text to capture contextual and semantic relationships, improving classification accuracy without a large computational cost. The model is fine-tuned on a labeled spam email dataset, with hyperparameters such as learning rate and batch size tuned for better performance. Because its self-attention layers attend to every token of the input at once, DistilBERT captures dependencies across an entire email (up to its 512-token input limit). This gives deeper insight into spam patterns than feature-based models, which depend on hand-crafted inputs, or sequential models, which process tokens one at a time.
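
The fine-tuning step described above can be sketched with the Hugging Face `transformers` library and PyTorch. This is a minimal illustration under stated assumptions, not the study's actual implementation. The checkpoint name `distilbert-base-uncased`, the label convention (1 = spam, 0 = legitimate), the learning rate of 5e-5, batch size of 16, maximum length of 128 tokens, three epochs, the two placeholder emails, and the helper names `encode_emails`, `train_one_epoch`, `predict`, and `main` are all hypothetical choices, since the original does not specify them.

```python
# Minimal DistilBERT fine-tuning sketch for binary spam classification.
# ASSUMPTIONS (not from the original study): checkpoint name, label
# convention (1 = spam, 0 = legitimate), hyperparameter values, and all
# helper names below are hypothetical illustrations.
import torch
from torch.utils.data import DataLoader, TensorDataset
from transformers import DistilBertForSequenceClassification, DistilBertTokenizerFast

CHECKPOINT = "distilbert-base-uncased"  # assumed pretrained checkpoint
LEARNING_RATE = 5e-5  # assumed; the study tunes this value
BATCH_SIZE = 16       # assumed; the study tunes this value
EPOCHS = 3            # assumed
MAX_LENGTH = 128      # assumed truncation length (DistilBERT accepts up to 512)


def encode_emails(tokenizer, texts, labels, max_length=MAX_LENGTH):
    """Tokenize raw email texts into a TensorDataset of (ids, mask, labels)."""
    enc = tokenizer(
        texts,
        padding="max_length",
        truncation=True,
        max_length=max_length,
        return_tensors="pt",
    )
    return TensorDataset(enc["input_ids"], enc["attention_mask"], torch.tensor(labels))


def train_one_epoch(model, loader, optimizer, device="cpu"):
    """Run one pass over the data (model assumed to be on `device`); return mean loss."""
    model.train()
    total, batches = 0.0, 0
    for input_ids, attention_mask, labels in loader:
        optimizer.zero_grad()
        out = model(
            input_ids=input_ids.to(device),
            attention_mask=attention_mask.to(device),
            labels=labels.to(device),  # passing labels makes the model return a cross-entropy loss
        )
        out.loss.backward()
        optimizer.step()
        total += out.loss.item()
        batches += 1
    return total / max(batches, 1)


@torch.no_grad()
def predict(model, tokenizer, texts, device="cpu"):
    """Return 1 (spam) or 0 (legitimate) for each email text."""
    model.eval()
    enc = tokenizer(texts, padding=True, truncation=True, max_length=MAX_LENGTH, return_tensors="pt")
    logits = model(**{k: v.to(device) for k, v in enc.items()}).logits
    return logits.argmax(dim=-1).tolist()


def main():
    """End-to-end toy run; downloads the assumed checkpoint on first use."""
    tokenizer = DistilBertTokenizerFast.from_pretrained(CHECKPOINT)
    # num_labels=2 attaches a freshly initialized two-way classification head.
    model = DistilBertForSequenceClassification.from_pretrained(CHECKPOINT, num_labels=2)
    # Placeholder examples only; substitute the labeled spam dataset here.
    texts = ["WIN a FREE prize now! Click here.", "The meeting has moved to 3pm tomorrow."]
    labels = [1, 0]
    loader = DataLoader(encode_emails(tokenizer, texts, labels), batch_size=BATCH_SIZE, shuffle=True)
    optimizer = torch.optim.AdamW(model.parameters(), lr=LEARNING_RATE)
    for epoch in range(EPOCHS):
        loss = train_one_epoch(model, loader, optimizer)
        print(f"epoch {epoch}: mean loss {loss:.4f}")
    print(predict(model, tokenizer, ["Claim your reward today"]))
```

Calling `main()` downloads the checkpoint and fine-tunes on two placeholder emails, so its predictions mean little until the toy lists are replaced with a real labeled dataset and a held-out split is used to tune the learning rate and batch size. A plain PyTorch loop is used instead of a higher-level trainer so that each step (tokenize, forward pass with labels, backpropagate, update weights) stays visible.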
